Honored to be part of a Cisco “Engineers Unplugged” session

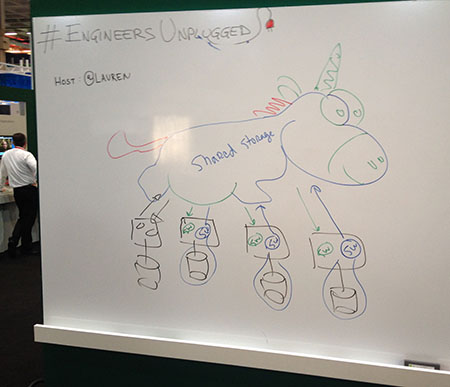

While visiting Cisco Live in Milan this week, I was honored to present at Cisco’s “Engineers Unplugged” show. Together with my Italian colleague and personal friend Fabio Chiodini, we drew up a relatively new architecture for shared storage.

In this session we showed how a software layer can turn ordinary servers with locally attached storage into shared storage.
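To make the idea a bit more concrete, here is a minimal Python sketch of the core trick. It is a toy model, not how VSAN, ScaleIO or any other product actually works: we assume each server contributes its local disks, and a hypothetical software layer writes every block to two different servers so that losing one server does not lose data. The `place_block` and `readable_after_failure` helpers, the server names and the two-copy policy are all illustrative assumptions.

```python
import hashlib

# Hypothetical policy: every block is stored on this many distinct servers.
REPLICAS = 2


def place_block(block_id: str, nodes: list[str], replicas: int = REPLICAS) -> list[str]:
    """Pick `replicas` distinct servers for a block, starting at a hashed offset."""
    if replicas > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    digest = hashlib.sha256(block_id.encode()).digest()
    start = int.from_bytes(digest[:8], "big") % len(nodes)
    # Walk the list of servers from the hashed start so each copy lands on a different server.
    return [nodes[(start + i) % len(nodes)] for i in range(replicas)]


def readable_after_failure(placement: dict[str, list[str]], failed: str) -> bool:
    """True if every block still has at least one copy on a surviving server."""
    return all(any(node != failed for node in copies) for copies in placement.values())


# Four servers, each contributing its local disks to one shared pool.
nodes = ["server-a", "server-b", "server-c", "server-d"]
placement = {f"block-{i}": place_block(f"block-{i}", nodes) for i in range(8)}

for block, copies in placement.items():
    print(block, "->", copies)

# With two copies on distinct servers, any single server failure leaves data readable.
print(readable_after_failure(placement, "server-b"))  # True
```

Real implementations add much more (rebalancing when nodes join, caching, rebuilding lost copies), but the essence is the same: the software layer, not a dedicated array, decides where data lives across the servers' local disks.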

There are many products out there today that use this storage architecture. During Engineers Unplugged we only described the architecture and didn’t really go into specific products.

Delivering shared storage purely from software is some of the new magic we are starting to see out there. As the trademark of Engineers Unplugged seems to be drawing unicorns, we decided to include a unicorn right in the presentation:

“From hippo to Unicorn… It is easily done in software!”

@Lauren: We beat you to it this time 🙂 Shared storage out of software is so much magic that we felt the unicorn should be there right from the start! Honored to have been allowed to present, and honored to have been named a Cisco Champion for 2015! And no, I’m not insulted that you initially thought my unicorn was a hippo before the drawing was finished 😛

The beauty of this storage architecture is that it takes only software and very standard hardware to build. Various implementations of this storage architecture exist, and they all take a slightly different approach, which is actually a good thing: you can select the implementation that best matches your requirements.

Some software-only implementations

For VMware users, VSAN may be the product that comes to mind first when thinking about local storage being “upgraded” to shared storage. Some of VSAN’s coolest features include extreme simplicity, good performance and considerable freedom in sizing storage independently of compute and memory.

ScaleIO from parent company EMC is another great example of this storage architecture, needing only software and “Bring Your Own Hardware”. ScaleIO delivers incredible scalability, easily stretching over one thousand (!!) nodes if required, even if those nodes run different operating systems and/or hypervisors.

But not all of these storage architectures come in a software-only form; many implementations are actually delivered with hardware platforms. Some small, some big and some scaling from small to very, very big; there is something for everyone, that is for sure!

Some hardware-included implementations

Most implementations that ship with hardware are hyper-converged, but not all. Take EMC’s Isilon, for example: it clearly uses the storage architecture we’re describing to deliver a single filesystem (called OneFS) that starts near the 100 TB mark and grows to over 20 PB if needed, using a true scale-out model (so no hot spotting or federating multiple systems). Thanks to the scale-out nature of this architecture, Isilon scales to hundreds of nodes serving files (and HDFS, by the way).

In the hyper-converged market we are seeing a lot of relatively young companies. Companies like Nutanix and SimpliVity have been around for a relatively short time, but they are the ones that introduced hyper-converged infrastructure to the market: they addressed a clear demand for all-in-one boxes that are extremely simple to set up, more or less follow a scale-out model and start very small. With its recent EVO:Rail announcement, VMware has opened the door for hardware vendors to easily create hyper-converged units that are super simple to set up and maintain, thanks to the EVO:Rail software that sits on top.

Apologies to all vendors I may have forgotten that use the same storage architecture; I’m not trying to round up all vendors in some shoot-out, but merely want to add some color to the picture of this specific storage architecture by using examples that are relatively well known in the market.
