Performance impact when using VMware snapshots

It is certainly not unheard of: “When I delete a snapshot from a VM, the thing totally freezes!” The strange thing is that some customers have these issues while others don’t (or are not aware of them). So what really DOES happen when you clean out a snapshot? Time to investigate!

Test Setup

So how do we test the performance impact on storage while ruling out external factors? The setup I chose was a VM with the following specs:

- Windows 2003-R2-SP2 VM (32 bit);

- Single vCPU;

- 2048 MB memory;

- 5 GByte boot drive as an independent disk on a SAN LUN;

- VM configuration and 4 GByte performance-measurement-disk on local storage (10K disks RAID1);

- IOmeter (version 2006.07.27) installed;

- Configure perfmon to measure both reads and writes per second from PhysicalDisk with a 20 second interval;

- Build these VMs on both an ESX3.5 and vSphere server to see any differences.

Note that the VM configuration files were also placed on local storage. As a result, the measured disk lies on local storage and the snapshot is created there as well (without fiddling with custom lines in the *.vmx file). Because the boot disk is an independent disk, it is not included in the snapshot at all (and is thus not impacted during the test).

IOmeter was set up for two tests. In the first test the delay between I/Os was set to 25 [ms]; in the second test it was set to 0 [ms]. Both tests used these workers:

| Worker name | Block size | Read / Write | Seq / Random | Nr of sectors | Resulting file size |
|---|---|---|---|---|---|
| Writer | 4 KByte | 100% write | 70% random | 2,000,000 | 1 GByte |
| Reader | 4 KByte | 100% read | 70% random | 2,000,000 | 1 GByte |

Table 1: IOmeter setup
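The “Resulting file size” column follows from the sector count. A quick check, assuming IOmeter counts its test file size in 512-byte sectors (an assumption on my part; the helper name `iometer_file_bytes` is hypothetical):

```python
SECTOR_BYTES = 512  # assumption: IOmeter counts its test file size in 512-byte sectors


def iometer_file_bytes(sectors: int, sector_bytes: int = SECTOR_BYTES) -> int:
    """Size in bytes of an IOmeter test file spanning `sectors` sectors."""
    return sectors * sector_bytes


size = iometer_file_bytes(2_000_000)
print(size)                    # 1024000000
print(round(size / 1e9, 3))    # 1.024 (decimal GB)
print(round(size / 2**30, 3))  # 0.954 (binary GiB)
```

So “1 GByte” is a rounded figure: just over one decimal gigabyte, a little under one binary gibibyte.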

Both tests were performed using these steps:

1. Boot the VM;

2. Start perfmon logging (measure both read and write operations per second, ROPS and WOPS);

3. Delete the IOmeter test file and start the IOmeter workload;

4. Measure the IOPS performed without a snapshot in place;

5. Stop IOmeter and delete its test file;

6. Create a snapshot within VMware (do not snapshot memory);

7. Restart IOmeter after the snapshot is created;

8. Measure the IOPS performed with a snapshot in place;

9. From VMware, select “delete snapshot”;

10. Measure the IOPS performed until the snapshot is deleted;

11. If the snapshot cannot be deleted, stop IOmeter, then wait for the snapshot deletion to succeed;

12. Stop perfmon;

13. Save the following data: VMware realtime stats for ROPS and WOPS on both host and VM level, and the perfmon stats;

14. Put all data from step 13 into one big Excel file.

How VMware snapshotting works

VMware has chosen to do its snapshotting exactly opposite to the way most SAN vendors perform snapshots. In VMware, all blocks to be written to the VMDK are actually written to a (growing) snapshot file. This snapshot file grows in increments of 16MB.

With this type of snapshotting, the original virtual disk file (VMDK) is no longer modified, which makes it very easy, for example, for a backup appliance to copy it out. Reverting the snapshot (going back to the original state) is also easy: basically, just remove the growing snapshot file, because the VMDK file was left unmodified.
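To make the mechanism concrete, here is a minimal toy model of the behaviour described above: writes go to the snapshot (delta), reads check the snapshot first, revert throws the delta away, and “delete” copies the delta back into the base. It is only a sketch; the class and method names are hypothetical, blocks are held in Python dicts, and the 16MB growth increment is taken from the text. It is not how ESX implements this internally.

```python
import math
from dataclasses import dataclass, field

GRAIN_MB = 16  # growth increment of the snapshot file, as quoted in the text


@dataclass
class SnapshottedDisk:
    """Toy model of a VMDK with one VMware-style snapshot (hypothetical names)."""
    base: dict[int, bytes]                                 # original VMDK: block -> data
    delta: dict[int, bytes] = field(default_factory=dict)  # snapshot file: block -> data

    def write(self, block: int, data: bytes) -> None:
        # While the snapshot exists, the base disk is never modified.
        self.delta[block] = data

    def read(self, block: int) -> bytes:
        # A newer copy in the snapshot wins; otherwise fall back to the base disk.
        if block in self.delta:
            return self.delta[block]
        return self.base.get(block, b"\x00")

    def delta_allocated_mb(self, block_bytes: int = 4096) -> int:
        # The snapshot file grows in whole 16MB increments.
        used_mb = len(self.delta) * block_bytes / 2**20
        return math.ceil(used_mb / GRAIN_MB) * GRAIN_MB

    def revert(self) -> None:
        # "Revert": simply throw the snapshot file away.
        self.delta.clear()

    def commit(self) -> None:
        # "Delete snapshot": copy every snapshot block into the base, then drop it.
        self.base.update(self.delta)
        self.delta.clear()


disk = SnapshottedDisk(base={0: b"old"})
disk.write(0, b"new")
print(disk.read(0), disk.base[0], disk.delta_allocated_mb())  # b'new' b'old' 16
disk.revert()
print(disk.read(0))  # b'old'
```

The asymmetry discussed next is visible here: `revert` just discards data, while `commit` has to touch every block in the delta.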

But who ever reverts snapshots on a regular basis? Most snapshots are committed to the original VMDK afterwards (backup scenarios!). This poses a problem, because when you commit the snapshot (in VMware this is confusingly called deleting a snapshot) you have to read the entire snapshot file and write all those blocks back into the original VMDK. This is thought to be the cause of VMs freezing while snapshots are committed.

Another problem in committing/deleting a snapshot within VMware is where to put the data that is written during the committing of the snapshot. One could of course just freeze the VM, commit all data from the snapshot to the original VMDK, then unfreeze the VM. This would be a far from acceptable solution though (a large snapshot takes a lot of time to commit). That is why VMware has come up with a solution: create a second snapshot…

How VMware snapshot deleting works

Let’s assume we have a VM with a snapshot. Now we delete the snapshot. So what happens?

First, VMware creates a second snapshot, which is a child of the first snapshot. All writes that the VM performs from now on go into the second snapshot. The first snapshot is then committed to the base disk.

When the first snapshot has been deleted successfully, you basically end up where you started: a VM with a snapshot attached. Hopefully, though, this second snapshot will be smaller than the previous one. VMware simply repeats this process until the one remaining snapshot is small enough (16MB at most from what I’ve seen). Then VMware freezes I/O to the VM, commits the final snapshot, and unfreezes the VM. Because the snapshot is so small, the time during which I/O is frozen remains acceptable.

However nice this may seem, snapshot deletion can actually fail if the VM writes too much data. In that case, the second snapshot grows faster than VMware can commit the first one to the base disk. This means your snapshot can effectively grow with every iteration instead of getting smaller!
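The create-child, commit-parent loop can be simulated in a few lines. This is a simplified sketch under my own assumptions, not VMware’s algorithm: the commit runs at a constant `commit_mb_s`, the VM writes `vm_write_mb_s` of new data into the child during each pass (no overwrites of the same block), and the final frozen commit happens once the delta is at most 16MB. The function name and parameters are hypothetical.

```python
def consolidate(snapshot_mb: float, commit_mb_s: float, vm_write_mb_s: float,
                final_mb: float = 16.0, max_passes: int = 50):
    """Toy model of 'delete snapshot': create a child, commit the parent, repeat.

    Returns (passes, seconds) once the remaining delta is small enough for the
    final frozen commit, or None if it never gets there within max_passes.
    """
    size, elapsed, passes = snapshot_mb, 0.0, 0
    while size > final_mb:
        if passes == max_passes:
            return None                         # delta is not shrinking: deletion "hangs"
        pass_time = size / commit_mb_s          # time to commit the current parent
        elapsed += pass_time
        size = vm_write_mb_s * pass_time        # data the VM wrote into the new child meanwhile
        passes += 1
    # Small enough: freeze I/O, commit the last delta, unfreeze.
    return passes, elapsed + size / commit_mb_s


print(consolidate(1024, commit_mb_s=32, vm_write_mb_s=8))   # (3, 42.5)
print(consolidate(1024, commit_mb_s=32, vm_write_mb_s=40))  # None
```

In the first call the delta shrinks by a factor of 4 per pass (1024, 256, 64, 16 MB). In the second the VM writes faster than the commit can drain, so each child is larger than its parent: exactly the failure mode described above.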

Test results: Overall read/write performance during snapshotting

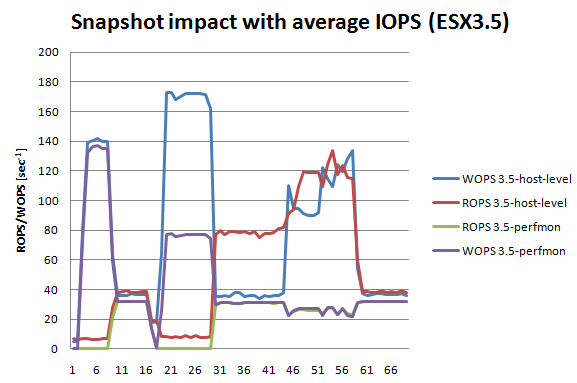

The first test turned out to perform just about 32 ROPS and 32 WOPS simultaneously, not saturating the RAID1 mirror set of 10K SAS disks at all. Performance on ESX3.5 and vSphere was almost equal, so I’ll just show the output for ESX3.5 here. The graph:

Graph 1: Average IOPS from a VM in conjunction with a snapshot

So what are we looking at here? Let’s look at the different parts of the graph:

| Sample | Event / Action |
|---|---|
| 01 | IOmeter starts to create its test file. We see a lot of WOPS now |
| 10 | Test file is created; IOmeter starts performing ROPS and WOPS at its 25 [ms] interval |
| 13 | Constant IOPS load of around 32 WOPS and 32 ROPS |
| 17 | Here the VMware snapshot is added, then IOmeter is restarted |
| 21 | IOmeter creates its test file. Again, heavy writes. Note that host-level WOPS are now twice as high* |
| 30 | Test file created; IOmeter starts performing its IOPS |
| 36 | The VM still performs the requested WOPS and ROPS. Note that host-level ROPS are twice as high** |
| 43 | Delete snapshot is called from VMware. Immediately the ROPS and WOPS shoot up |
| 46 | Note that the number of IOPS to and from the VM remains at quite a steady level |
| 52 | Host-level WOPS rise even further. This has to do with the first snapshot being gone now*** |
| 60 | The snapshot is committed to the original VMDK. From now on, the VM performs exactly like before |

Table 2: Events and actions related to Graph 1

Explanations:

*) The snapshot file is growing here. VMware needs twice as many writes to the filesystem in order to store all data blocks inside the empty (but now growing) snapshot: besides writing the data block itself, it has to record where that block now lives.

**) When reading from a snapshot, VMware first has to find out whether the block resides in the base disk only, or whether an updated version of the block exists within the snapshot. This requires twice the number of reads at the host level for every read the VM issues.

***) The initial snapshot (1 GByte in size) has now been cleaned from disk, and the WOPS suddenly rise even further. As far as I can tell, this has to do with the snapshot size. Previously the snapshot was 1 GByte, spreading the random I/O over a larger part of the physical disks. The disk heads then have to seek across a larger portion of the platter, which delivers fewer IOPS. After the big snapshot file is gone, only a very small snapshot file remains. Now we get closer to track-to-track seeks (almost sequential I/O patterns), which have a shorter head seek time and thus increase the number of IOPS the disks deliver (for more info on this, read Throughput part 1: The Basics).
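The seek-distance effect in the last footnote can be roughed out with the usual single-spindle estimate: one seek plus, on average, half a rotation per random I/O (transfer time for 4KB blocks ignored). The seek times below are illustrative assumptions for a 10K drive, not measured values from this test, and `disk_iops` is a hypothetical helper.

```python
def disk_iops(rpm: int, seek_ms: float) -> float:
    """Rough random IOPS of one spindle: one seek plus half a rotation per I/O.

    Transfer time for small (4KB) blocks is ignored.
    """
    rotational_ms = 0.5 * 60_000 / rpm   # average rotational latency in ms
    return 1000.0 / (seek_ms + rotational_ms)


# Assumed seek times for a 10K drive: longer seeks vs. near track-to-track seeks.
print(round(disk_iops(10_000, 4.5)))  # 133
print(round(disk_iops(10_000, 0.5)))  # 286
```

With the rotational latency fixed at 3 ms for 10K RPM, shortening the seek is the only lever left, which is why a compact snapshot file can noticeably raise the IOPS the same disks deliver.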

Some very important conclusions can be drawn from Graph 1. For starters, simply having a snapshot on a VM means that all ROPS of this VM grow by a factor of 2 (!). On top of that, when the VM writes a block that was not written to the snapshot before, the WOPS for that VM double as well. This is because VMware has to update the table of “where to find which block” (snapshot or base disk). This impacts performance significantly!

Secondly, deleting a snapshot simply shoots disk IOPS through the roof. In the test (samples 46..60) both the ROPS and WOPS went as high as the storage would go (there may be a clipped maximum to this, but if so, it lies beyond the performance of a set of 10K SAS drives in RAID1).
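These observations can be condensed into a rough rule of thumb. The sketch below simply encodes the ratios measured in this post (reads double; writes double only for blocks not yet in the snapshot); it is an empirical approximation, not a formula from VMware, and `host_iops` and `new_block_fraction` are hypothetical names.

```python
def host_iops(vm_rops: float, vm_wops: float, new_block_fraction: float) -> tuple[float, float]:
    """Estimate host-level (ROPS, WOPS) for a VM running on a snapshot.

    new_block_fraction: share of the VM's writes that hit blocks not yet in the snapshot.
    """
    if not 0.0 <= new_block_fraction <= 1.0:
        raise ValueError("new_block_fraction must be between 0 and 1")
    host_rops = 2 * vm_rops                          # every read: block lookup + data read
    host_wops = vm_wops * (1 + new_block_fraction)   # new blocks also need a table update
    return host_rops, host_wops


print(host_iops(32.0, 32.0, 1.0))  # (64.0, 64.0)  snapshot still growing
print(host_iops(32.0, 32.0, 0.0))  # (64.0, 32.0)  all written blocks already in the snapshot
```

The point for capacity planning: a VM that needs 64 IOPS without a snapshot can need up to twice that at the host level while a snapshot is in place, before any snapshot deletion starts.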

Performance impact on saturated disks

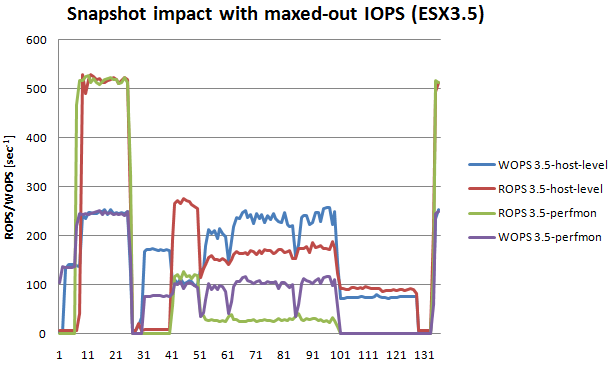

We have seen performance impact from using and deleting snapshots, but the impact on the VM itself was relatively small. So what happens if a set of disks is saturated by a VM and we start to use a snapshot? Check out the next graph:

Graph 2: Maxed-out IOPS from a VM in conjunction with a snapshot

In this graph the VM saturates the disk it runs on (samples 11-21). IOmeter still runs the same reader and writer workers, yet the disks manage to perform twice as many ROPS as WOPS. This is probably because the disk set is a RAID1, which has a write penalty of 1:2: every write has to land on both disks of the mirror (also see Throughput part 2: RAID types and segment sizes). Around sample 26 the VM is snapshotted. During samples 31-40 IOmeter creates the test file it will use.

At sample 41 things get interesting; here we see the performance of the VM with a snapshot in place. Because of the read overhead of having the snapshot, total performance already drops by a factor of 5 (!). At sample 51 the snapshot is deleted. VMware now creates the second snapshot and starts to commit the first snapshot into the base disk. At sample 61 we see a small glitch in IOPS: here the first snapshot is deleted, but the second snapshot has already grown too large. As a result, a third snapshot is added to the VM while VMware commits the second one (since the first snapshot was already committed, we effectively still have only two snapshots).

But now VMware gets into trouble: every time a snapshot is committed, its child has already grown larger than the initial one. This means VMware will be unable to commit the snapshot. At sample 100 I stop IOmeter. The purple and green lines go to zero (the VM performs no more IOPS), and a little while later, at sample 128, the snapshot is successfully deleted from the VM. After the snapshot is deleted, I restart IOmeter at sample 130.
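The RAID1 write penalty can be expressed as back-end disk operations: each front-end read costs one disk operation, each front-end write costs two. A minimal sketch, plugging in the saturated-baseline numbers from the perfmon graph further down (510 ROPS, 250 WOPS); the helper name is hypothetical and the model ignores caching.

```python
RAID1_WRITE_PENALTY = 2  # every front-end write has to land on both halves of the mirror


def backend_iops(front_rops: float, front_wops: float,
                 write_penalty: int = RAID1_WRITE_PENALTY) -> float:
    """Physical disk operations needed to serve the given front-end load."""
    return front_rops + write_penalty * front_wops


print(backend_iops(510, 250))  # 1010
```

The writes account for half of the back-end work while delivering only about a third of the front-end operations, which is consistent with the disks completing roughly twice as many reads as writes.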

Performance impact on saturated disks in detail

Looking at the previous graph, you can extract the performance impact from it. To clarify, I created a graph with the perfmon output only:

Graph 3: Disk IOPS impact on a snapshotted VM

Here you can clearly see the performance from the VM’s perspective. Remember, the VM is trying to perform as many ROPS and WOPS as it possibly can. At the beginning, the VM performs an impressive 510 ROPS and 250 WOPS simultaneously (samples 7-25). Then at sample 30 the snapshot is added. After IOmeter has created its test file, performance settles at about 110 ROPS and 100 WOPS (samples 43-49). Note the difference!

Then I (try to) delete the snapshot at sample 50. Because the disks remain saturated while VMware adds more reads and writes in order to commit the snapshot, overall IOPS remain the same, but reads take another hit and fall all the way down to a poor 28 ROPS. Compared to the initial 510 ROPS, the VM ends up at about 5.5% of its original read performance!!
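The percentages follow directly from the perfmon figures quoted above; a quick check of the arithmetic (the variable and function names are just for illustration):

```python
baseline_rops = 510   # no snapshot (samples 7-25)
snapshot_rops = 110   # snapshot in place (samples 43-49)
deleting_rops = 28    # while the snapshot is being deleted


def pct_of(value: float, baseline: float) -> float:
    return 100.0 * value / baseline


print(f"{pct_of(snapshot_rops, baseline_rops):.1f}%")  # 21.6%
print(f"{pct_of(deleting_rops, baseline_rops):.1f}%")  # 5.5%
```

So merely having the snapshot already costs the VM nearly 80% of its read performance in this saturated scenario; deleting it takes away most of what is left.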

What can be done about performance impact?

The big question, of course, is how we can prevent this impact and keep our VMs running even with a snapshot (or a snapshot being deleted). Actually, there are several things you can do:

- Increase the performance of your LUNs by adding more disks to them;

- Measure and spread the IOPS over your LUNs so that busy LUNs get quieter and quiet LUNs get busier;

- Create your snapshot files on a different LUN, either by moving the VM configuration files to that LUN or by adding a line like the following to the VM configuration file (*.vmx):

`workingDir = "/vmfs/volumes/Datastore1/vm-snapshots"`

The last option is possibly the best one: you could actually create a separate LUN just for your snapshots! If you are a heavy user of snapshots (or your backup solution is), you could even consider adding two solid-state disks in a RAID1 configuration and putting all snapshots on those!
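If you manage many VMs, adding the `workingDir` line by hand gets tedious. Below is a minimal sketch of a text helper that sets (or replaces) a `key = "value"` line in the contents of a .vmx file. The helper is hypothetical and only manipulates text; I am assuming you would apply it to a backed-up copy of the file while the VM is powered off, and that precaution is my assumption rather than something tested here.

```python
import re


def set_vmx_option(vmx_text: str, key: str, value: str) -> str:
    """Return vmx_text with `key = "value"`, replacing an existing entry for key if present."""
    new_line = f'{key} = "{value}"'
    # Match a whole line that starts with the key, e.g.  workingDir = "..."
    pattern = re.compile(rf'^[ \t]*{re.escape(key)}[ \t]*=.*$', re.MULTILINE)
    if pattern.search(vmx_text):
        # A function as replacement keeps backslashes in `value` from being interpreted.
        return pattern.sub(lambda _m: new_line, vmx_text, count=1)
    body = vmx_text.rstrip("\n")
    return (body + "\n" if body else "") + new_line + "\n"


vmx = 'displayName = "test-vm"\nmemsize = "2048"\n'
print(set_vmx_option(vmx, "workingDir", "/vmfs/volumes/Datastore1/vm-snapshots"))
```

Replacing an existing entry instead of appending a second one avoids ending up with two conflicting `workingDir` lines in the same file.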

What about linked clones?

My colleague (thanks Simon!) did a quick test on our VMware View environment. A linked clone Windows XP image was used, from which IOPS were performed using IOmeter. The IOPS were then measured using perfmon from within the VDI desktop, and on a host level (an unused SAN LUN was used to put the linked clone on which was measured from VMware). It turns out that linked clones behave just like “normal” VMs with a snapshot: reads double, and writes double too if the block to be written is not part of the snapshot yet.

Conclusion

Looking at the measurements above, the conclusion is clear: a VM can have a snapshot, and even have that snapshot deleted, without a noticeable impact on its own performance, provided the disks the VM lies on can deliver many more IOPS than the VM normally demands. So the statement that deleting a snapshot freezes the VM is not true, at least not in the setup of test 1.

On the other hand, if a VM (almost) saturates a disk set IOPS-wise, as in test 2, the impact of having and especially deleting a snapshot can be disastrous. In the test I managed to reduce the VM’s read performance to 5.5% of its original value!

Deleting snapshots can take a long time, as we know. The tests made clear that the time required to commit the snapshot depends not just on the size of the snapshot file, but also on the maximum number of IOPS that the disks holding the snapshot and the base disk can deliver. Even the write rate of the VM during the commit significantly affects the time required to clean up.
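A back-of-the-envelope model shows how these three factors interact. The assumptions are my own simplifications, not VMware documentation: $S_0$ is the snapshot size when delete is called, $c$ the rate at which the disks can copy snapshot data into the base disk, and $w$ the rate at which the VM writes new data into the child snapshot (every write assumed to hit a block not yet in the child). Each commit pass $k$ takes $t_k$ and leaves a child of size $S_{k+1}$:

$$
\begin{aligned}
t_k &= \frac{S_k}{c}, \qquad S_{k+1} = w\,t_k = \frac{w}{c}\,S_k \;\Rightarrow\; S_k = S_0\left(\frac{w}{c}\right)^{k},\\
T &\approx \sum_{k=0}^{\infty} \frac{S_k}{c} = \frac{S_0}{c}\cdot\frac{1}{1 - w/c} = \frac{S_0}{c - w}, \qquad w < c.
\end{aligned}
$$

The total time $T$ grows with the snapshot size $S_0$, shrinks with the commit rate $c$ (bounded by the IOPS the disks can deliver), and blows up as the VM’s write rate $w$ approaches $c$; for $w \ge c$ the series diverges, which is the never-ending deletion seen in test 2. Because the VM’s writes compete for the same spindles, $c$ itself also drops as $w$ rises.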

This is not really visible in this blogpost, but I have run the tests on both vSphere and ESX3.5. The results were almost identical. In my tests, vSphere appeared to be slightly faster at deleting the snapshot, and VM performance was impacted even less. Since the hardware the two were running on was not 100% identical, I do not want to compare them too much; it is sufficient to state that the performance of ESX3.5 and vSphere does not differ significantly when looking at virtual disk snapshots.

Preventing the performance impact of snapshots has proven to be possible. Things like spreading VM load across LUNs (“storage DRS” for LUNs using Storage VMotion would be nice!) or creating the snapshot files on a different or even dedicated LUN can help.


[…] http://www.vmdamentals.com/?p=332 […]

Very good write-up on the snapshot problem. I hope this will clear up the mystery for many users.

In my time working as a Technical Support Engineer for VMware, the majority of the problems we had to solve were stopped or hanging VMs caused by snapshots.

For example, a guy made a snapshot of his Exchange Server (high I/O) and forgot to commit the changes back to the base disk. The VM had actually been writing to the snapshot for the last couple of months, and over time the delta file grew so big that he ran out of space on the datastore, until the VM actually stopped working.

VMware did not do a great job of explaining to users how snapshots work and what they do in the background; we had to go to great lengths to explain to customers how it works, and told them to rather not use snapshots at all.

Some were using snapshots for versioning in Test and Dev departments, and I have seen a VM with about 40 (!) snapshots on different levels in a snapshot tree. Committing those snapshots was sometimes impossible because the snapshot chain was broken and had to be corrected manually, descriptor file by descriptor file, and sometimes they were just lost because the base disk had been altered in the meantime. It was a mess and a massive headache for me to fix in a remote desktop session.

Also, VMware’s naming convention for the different snapshot operations is very unfortunate and highly confusing to customers: they think that when they select “Delete Snapshot” they will delete the delta file and basically revert to the original state of the VM. They are not aware that this actually commits all the changes back to the base disk and makes them permanent.

I remember a discussion with a customer about all those options (Go To, Revert, Delete, etc.), and after arguing with him for a while even I got confused. There was not a single official document I could have referred the customer to that explained to him (and me) the actual functionality of those options.

I was hoping VMware would change this in vSphere 4, but they didn’t. Obviously not enough feature/change requests were sent in by customers or TAMs. I hope this post raises more awareness among the VMware developers and that they start to address the problems associated with snapshots.

David,

Thanks for your side of the story 🙂 I sometimes deliver jumpstart trainings, and I always spend some extra time on snapshots. Very necessary too, because people tend to make snapshots for backup, for versioning, for just about everything, and think it will actually help. I always encourage them not to use snapshots at all, and if they do, to NOT FORGET about them. I wrote a previous blogpost on that (Ye olde snapshot: http://www.vmdamentals.com/?p=76 ). In vSphere it got just a little better, because the provisioned space is actually calculated “as if” the snapshot has grown to be as large as the system disk (and that is a correct assumption, because it can). The naming convention simply is horrible. In ESX 2.5 this was much better: you could discard or commit a snapshot. Discard meant you lose the data (nowadays revert); commit meant applying the changes in the snapshot to the base disk (and then deleting the snapshot). The latter is nowadays simply called delete. What???? So does delete mean you delete the snapshot? Well, yes and no, I tell people. The snapshot ends up getting deleted, but the data in the snapshot gets committed first. A very, very bad choice of words in my opinion. I would love to see the old terms coming back (discard/commit).

Excellent article!

Pingback derek858.blogspot.com

Excellent work…

[…] is that snapshots living alongside the VM are not a good idea for longer-term backups, have a performance impact that should not be underestimated, and in the worst case can even cause VMs to fail. The list of pitfalls with […]

Best analysis of snapshots I’ve seen so far. Regarding your last comment about DRS for LUNs: it’s already here, believe it or not. This company called Compellent has a technology called “data placement optimization” where they will “vmotion” your storage blocks to different LUNs based on IO.

In case you’re wondering, I don’t work for them. I was just amazed when I came across their technology.

http://www.compellent.com/Products/Software/Automated-Tiered-Storage.aspx

@Walle: I know Compellent. They migrate blocks of data between tiers of storage based on usage. It seems nice, but this is a whole other discussion. I believe Compellent can force VMs to stay on faster storage if needed, which is a definite requirement (think of “the database that comes alive once a month”). With virtualization, “you should not care where your VM runs”. That could be true for storage as well, but it is not. You never know what impacts what, and I’d always want to know which load impacts what… It is still a nice approach from Compellent. Personally I like Sun’s 7000 storage even better; they use ZFS as the filesystem, which performs like crazy from SATA alone, but they also use cache and SSDs in a VERY smart way. They’re the only ones that differentiate between read and write SSDs (and they have every reason to). Be sure to check it out (and no, I do not work for Sun 🙂 )

Thanks for the write-up! This is really good stuff and should be linked from VMware communities.

Erik, how did you monitor IO at the ESX level? esxtop gives you good info, but I’m not sure how to extract the data for my own analysis on my environment.

@Jeremy: You can do all that with esxtop, and esxtop can run in batch mode outputting the data you require (-b I think). I used the VI client, the performance tab is also great, and it has a button that lets you save data either as a jpg, or as an Excel file. Especially the Excel option is great for this!

Excellent article. Now I understand why my VMs have been getting slower and slower. I have been using snapshots as a versioning mechanism. I think the terminology surrounding snapshots, coupled with Snapshot Manager, which implies that complex trees of snapshots are OK, led me and others down this path. Suffice it to say, I will not be using snapshots except as a one-off safety net that gets deleted (committed) immediately after the update. Thanks again.

[…] Performance impact when using VMware snapshots […]

[…] at one of my older blogposts, I discovered I had already done my homework on snapshots: “Performance impact when using VMware snapshots“. In this post is a very clear conclusion on the impact of VMware snapshots on the underlying […]

Thank you for the excellent post / information. We use Veeam 5 to back up our VMs, including SQL, Exchange and file servers. Whenever a snapshot is created or released (especially when released), we experience interruptions in connectivity. Originally, I had thought that VMware was locking the vmdk and all activity stopped. After reviewing this post and doing some of our own testing, it appears that the issue is network connectivity. While processing on the VM continues during the snapshot creation/release, network connectivity to that VM is interrupted on average once at creation and multiple times during the consolidation that occurs upon release.

I have tested using a separate dedicated LUN on internal 15k SAS drives, yet the interruptions always occur. While the faster/dedicated LUN reduces the number and overall length of the interruptions during release, they still occur – at least once at each point.

I don’t suppose you have any information related to that and how we might lessen this interruption?

Hi,

First I’d check whether the same occurs when you just create / delete a snapshot from VMware. If the network isolation does not occur then, it’s probably a Veeam issue (maybe the OS freeze). If VMware snapshotting alone causes the issue as well, I’d check the storage latency that occurs during creation, existence and especially deletion (= committing) of the snapshot. If the OS fails to perform disk I/O, freezes or observed freezes can occur.

This does occur when doing a ‘native’ snapshot, regardless of the disk subsystem or RAID config (Tested RAID 5 w/5 disks and a RAID 1 using 15k SAS).

I entered a support ticket with VMWare and the response I received was that this is accepted/expected behavior. Since a snapshot must quiesce the VM, the support rep stated that short interruptions in service must occur.

I tried to duplicate your testing, and while I/O continued within the VM, I/O to/from the VM was always interrupted at snapshot creation/release. I’ve also tested native VSS snapshots using Symantec BESR and did not experience the same interruption of service (on standalone servers and VMs). I do appreciate that BESR is not trying to freeze the entire OS, but a single disk subsystem, which may account for the difference?

I have yet to find a configuration that will allow us to create/release snapshots without this momentary interruption. In reading the Veeam forum and other blogs, I have seen some that agree with/accept the VMWare response as logical and others that indicate they do not experience any complete interruptions (slow down, sure, but not total interruption).

Anyway, thank you for your work on this blog – very informative.

TOPry, we have the same problem with vRanger Pro. We were hoping that moving to Veeam would help. Can you tell me how you did your testing to notice that it was a network connectivity problem?

thanks

Jason,

It makes no difference whatsoever which backup solution you use if it relies on VMware snapshots. Each of these solutions (PHDvirtual, Veeam, vRanger and many others) uses VMware snapshots, so the issues described here apply to all of them.

If you want to solve your problems, then you should design your storage in such a way that you can handle the extra I/O requests. Yes, you NEED to consider VMware snapshotting in your storage design!

[…] Disk Alignment. For any pre-Windows 7, pre-Windows 2008 Server or older Linux based systems, your disks may be misaligned. Misalignment may cause quite a performance hit, especially when your storage underneath does not have a lot of IOPS to spare. You can read all about it here: Throughput part 3: Data Alignment. Apart from misalignment, using a lot of snapshots on your VMs may also cause quite an impact to performance. For more details you could look at: Performance impact when using VMware Snapshots; […]


[…] or basement to see if I can dig up some cool hardware to use this year. For example, the “Performance impact when using VMware snapshots” is something way overdue for a revisit under vSphere 5.0 or 5.1 […]

[…] I have been aching to do more cool deepdive stuff as well as some revisiting (for example – Performance impact when using VMware snapshots is dying for a revisit on vSphere 5.1 and vSphere .next – but a lot of […]

Very good article.

I don’t often leave comments on forums, but this explanation has helped explain in finer detail the performance issues I accidentally created in our business VM infrastructure. Finding the cause was easy and fixing it was easy, but getting a detailed reason why it happened was not. Thank you for writing this.

[…] It’s worth pointing out that keeping long running VMware Snapshots on ESX servers is strongly discouraged for production systems, and particularly discouraged if you have multiple snapshots. They incur a constant performance impact and they are the #1 cause of serious long term support issues. If you want some interesting reading on just how much performance impact they have you can read all about it in this VMDamentals article by Eric Zandboer. […]